The landscape of AI is evolving rapidly, and enterprises need solutions that can keep pace. That’s why Dell Technologies and NVIDIA are expanding their collaboration to offer a comprehensive approach to AI infrastructure management at scale, leveraging Dell’s industry-leading infrastructure and the orchestration capabilities of the NVIDIA Run:ai platform. Together, the two companies deliver a unified solution to streamline the deployment and management of AI systems. Here’s what you need to know.

Meeting the Challenges of AI Infrastructure

Deploying AI at scale comes with unique challenges. Enterprises often struggle with underutilized resources, complex GPU management, and the need to align AI operations with dynamic business goals. The NVIDIA Run:ai orchestration platform helps address these issues by enabling efficient sharing and scheduling of GPU resources, all while supporting the entire AI lifecycle—from model development and customization to large-scale training and inference.

When paired with Dell’s comprehensive ecosystem, including the Dell AI Factory with NVIDIA, this collaboration provides organizations with a simplified, scalable solution for AI innovation.

A Game-Changing Collaboration

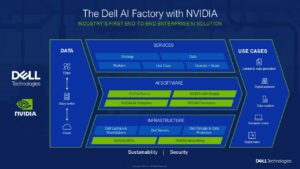

At the heart of this collaboration is the Dell AI Factory with NVIDIA, an end-to-end solution that helps ensure customers have everything needed for AI success: AI infrastructure, AI software and models, services, and now advanced orchestration capabilities for AI development and deployment. The integration of the NVIDIA Run:ai platform into this ecosystem amplifies its power by ensuring that resources are utilized efficiently. Whether scaling up workloads or optimizing existing infrastructure, enterprises can now do so without adding unnecessary complexity.

Unveiling the Architecture

To fully grasp the benefits of this collaboration, let’s take a closer look at the architecture that powers it. The Dell AI Factory with NVIDIA combines compute, storage and networking with NVIDIA AI Enterprise software and can include the NVIDIA Run:ai orchestration platform. The architecture ensures seamless resource allocation, scalability, and agility, enabling enterprises to deploy AI at scale efficiently.

This architecture shows how Dell Technologies and the NVIDIA Run:ai platform work together to address the complexities of AI infrastructure management. With the combination of GPU fractioning, policy-driven resource management, and lifecycle support, enterprises gain unparalleled control over their AI operations.

Key Benefits of NVIDIA Run:ai Integration

The NVIDIA Run:ai orchestration platform offers several transformative benefits for enterprises:

- Dynamic Allocation of GPU Resources: Meet fluctuating workload demands efficiently without the need for costly hardware expansions.

- AI Lifecycle Integration: From development to deployment, the platform supports every phase of AI workflows.

- Centralized Management: Unified resource pooling across on-premises, cloud, and hybrid environments simplifies operations.

- Policy-Driven Orchestration: Align AI resource management with strategic business objectives through governance and security integration.

- Enhanced GPU Utilization: Maximize existing infrastructure, ensuring every GPU delivers optimal performance.
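
To make the utilization point concrete, here is a minimal Python sketch of why sharing GPUs in fractions can fit more workloads onto the same hardware. It is a toy first-fit simulation, not the NVIDIA Run:ai scheduler or its API: the job names, the fractional demands, the two-GPU pool, and the helper names `Gpu`, `place`, and `simulate` are all assumptions made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Gpu:
    name: str
    free: float = 1.0                      # fraction of the device still unallocated
    jobs: list = field(default_factory=list)


# Hypothetical workloads: (job name, fraction of one GPU it actually needs).
JOBS = [
    ("notebook-a", 0.25),
    ("notebook-b", 0.25),
    ("inference", 0.5),
    ("training", 1.0),
]


def place(jobs, gpus, fractional):
    """First-fit placement; returns the names of jobs left waiting."""
    pending = []
    for job_name, demand in jobs:
        # Without fractioning, every job reserves a whole GPU.
        need = demand if fractional else 1.0
        for gpu in gpus:
            if gpu.free >= need:
                gpu.free -= need
                gpu.jobs.append(job_name)
                break
        else:
            pending.append(job_name)
    return pending


def simulate(fractional, gpu_count=2):
    """Run the toy placement on a fresh pool of GPUs."""
    gpus = [Gpu(f"gpu{i}") for i in range(gpu_count)]
    pending = place(JOBS, gpus, fractional)
    return pending, gpus


if __name__ == "__main__":
    for mode in (False, True):
        pending, gpus = simulate(fractional=mode)
        label = "fractional" if mode else "whole-GPU"
        print(label, "pending:", pending)
        for gpu in gpus:
            print("  ", gpu.name, gpu.jobs, "free:", gpu.free)
```

In this toy setup, whole-GPU allocation leaves two of the four jobs waiting even though the two notebooks use only a quarter of a device each, while fractional allocation places all four on the same two GPUs. A production orchestrator also has to handle memory isolation, priorities, and preemption, which this sketch leaves out.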

A Future-Proof Solution

The Dell Technologies and NVIDIA collaboration represents a forward-thinking approach to AI infrastructure management. By combining advanced hardware, NVIDIA AI Enterprise software, and the NVIDIA Run:ai platform’s orchestration capabilities, enterprises can focus on what matters most: delivering impactful AI solutions.

With this expanded collaboration, Dell Technologies and NVIDIA are simplifying AI at scale, empowering enterprises to navigate the complexities of AI with confidence. Whether you’re launching new AI initiatives or scaling existing operations, this collaboration offers the infrastructure, software, and services to turn great ideas into great results.

To learn how to accelerate time to value by optimizing AI workloads on the Dell AI Factory with NVIDIA and NVIDIA Run:ai, refer to our most recent Dell Reference Design (DRD). You can also contact your Dell account executive to explore Dell, NVIDIA Run:ai, and the Dell AI Factory with NVIDIA for your data needs.